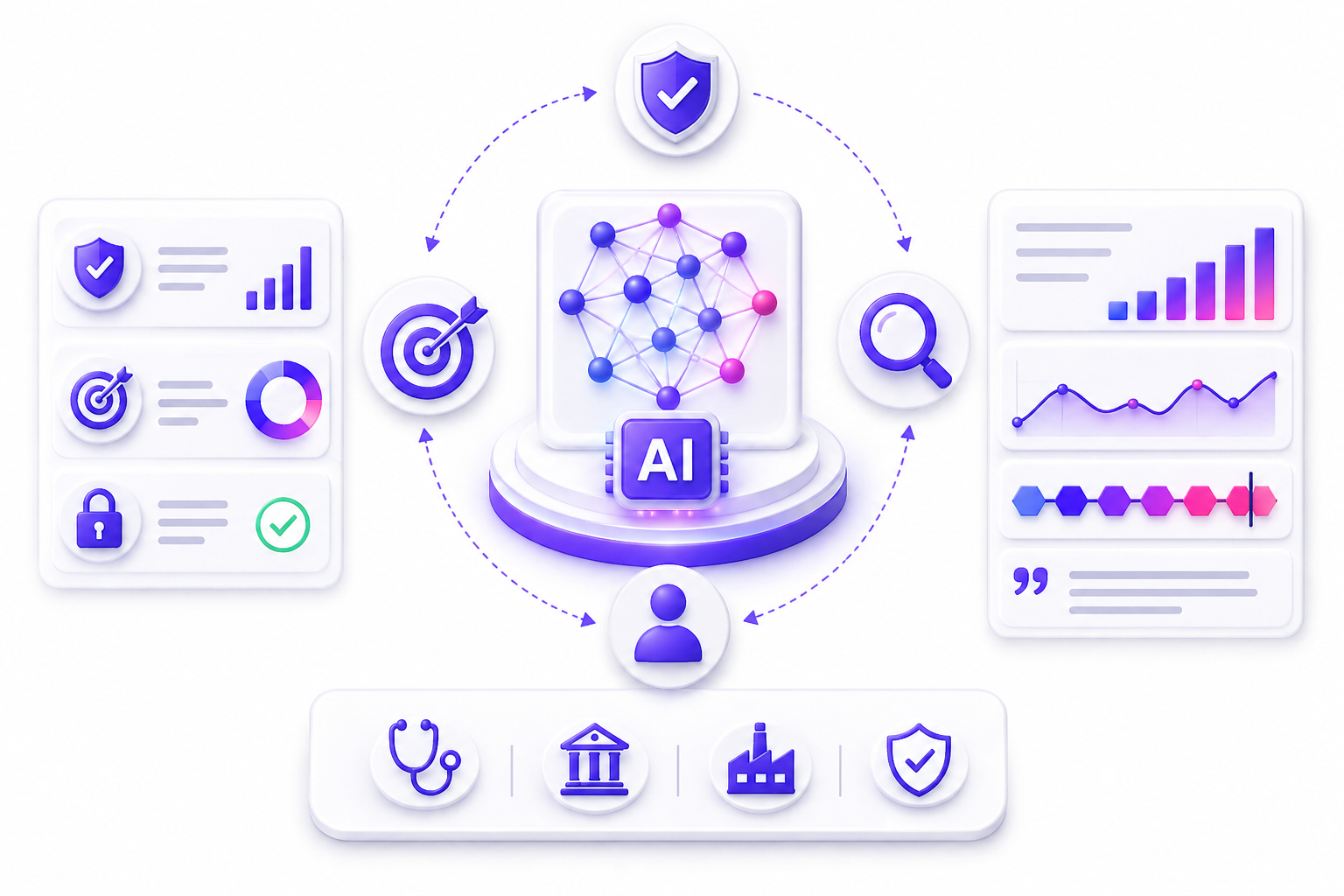

DARWIN = Data, Algorithm, Responsibility, Workflow, INfrastructure Model

Building responsible AI architectures that structure data quality, model robustness, accountability, workflow traceability, and infrastructure efficiency. DARWIN helps enterprises govern AI systems with clarity, compliance, explainability, sustainability, and audit-ready operational control.

Frameworks

Enterprise AI Fails When Responsible Governance Is Missing

- Data quality and lineage remain unclear

- Algorithms lack explainability and robustness

- Accountability is not clearly assigned

- AI workflows are difficult to trace

- Compliance evidence is scattered across teams

- Infrastructure cost and sustainability are unmanaged

Responsible AI Framework

Five DARWIN Pillars That Turn AI Governance Into Enterprise

A structured model to assess data, algorithms, responsibility, workflows, and infrastructure so AI systems remain trusted, explainable, compliant, and scalable.

01

Data Quality, Fairness & Lineage

Ensure every AI initiative starts with governed, reliable, and bias-aware data foundations before models are trained or deployed.

- Validate data quality, diversity, and completeness

- Track lineage, provenance, consent, and usage rights

- Reduce bias through structured review checkpoints

02

Algorithm Robustness & Explainability

Evaluate model design, performance, safety, and transparency so AI outputs are reliable, explainable, and aligned to business intent.

- Test model robustness, accuracy, and safety behavior

- Improve explainability for high-impact decisions

- Align algorithms with domain-specific goals

03

Responsibility, Compliance & Accountability

Define who owns AI outcomes, how risks are governed, and how ethical, legal, and stakeholder responsibilities are documented.

- Assign ownership for decisions and escalation paths

- Map compliance needs across legal and risk teams

- Improve transparency, communication, and trust

04

Workflow Traceability & Human Oversight

Structure the full AI lifecycle from data to deployment with clear checkpoints, human-in-the-loop controls, and audit-ready documentation.

- Document lifecycle steps from modeling to monitoring

- Build traceable workflows with versioned checkpoints

- Enable oversight, incident response, and review loops

05

Infrastructure Efficiency & Sustainability

Optimize compute, deployment, cost, runtime resilience, and environmental impact so enterprise AI scales responsibly and efficiently.

- Assess cloud, on-premise, or hybrid infrastructure fit

- Monitor cost, performance, energy use, and emissions

- Improve reliability, scalability, and sustainable operation

Frameworks

Designed for Responsible AI Across Industries

Pharmaceutical & Life Sciences

Govern clinical, regulatory, safety, quality, and evidence-driven AI systems with stronger traceability, accountability, and compliance readiness.

Investment, Venture & Portfolio

Support research, diligence, portfolio review, scenario modeling, risk assessment, and explainable investment intelligence workflows.

Finance & Banking

Built for risk review, compliance, financial analysis, model governance, audit readiness, operations, and banking decision controls.

Engineering & Construction

Strengthens project controls, procurement checks, technical reviews, risk tracking, workflow traceability, and execution governance.

Healthcare Delivery

Helps govern care operations, clinical coordination, patient workflows, compliance controls, service quality, and decision support systems.

Tech, Media & Telecom

Supports product, platform, service, customer intelligence, digital operations, model monitoring, and fast-changing AI workflows.

Manufacturing & Industrial

Applies to plant operations, quality systems, maintenance, process control, safety workflows, industrial analytics, and AI governance.

Retail & Consumer Goods

Enables governed models for merchandising, demand signals, supply chains, customer operations, retail workflows, and service decisions.

Education & Research

Useful for learning systems, academic operations, research synthesis, knowledge governance, evidence mapping, and responsible AI use.

What DARWIN Enables

Turning Responsible AI Principles Into Governed Execution

DARWIN helps enterprises move from AI experimentation to structured governance by clarifying data controls, model behavior, accountability, workflow traceability, and infrastructure readiness.

Trusted Data Governance

Define data quality, lineage, consent, bias checks, and access controls before AI systems move into training, testing, or production.

Explainable Model Control

Assess model robustness, safety, explainability, and alignment so algorithmic outputs can be reviewed, trusted, and improved.

Clear Accountability

Map ownership, legal responsibility, stakeholder communication, and escalation paths so AI outcomes are not left unowned.

Traceable AI Workflows

Document lifecycle steps from data to modeling, deployment, monitoring, review, and incident response with audit-ready checkpoints.

Sustainable Infrastructure

Evaluate compute choices, cost efficiency, runtime reliability, energy use, and environmental impact for responsible AI scaling.

FAQs

Need Help? We’ve Got You Covered.

Find clear answers on how DARWIN helps enterprises govern AI systems, manage risk, improve explainability, and build audit-ready responsible AI practices.

DARWIN stands for Data, Algorithm, Responsibility, Workflow, and INfrastructure. It is a Responsible AI framework that helps enterprises design, assess, govern, and continuously improve trustworthy AI systems.

DARWIN helps enterprises reduce ethical, regulatory, operational, and technical risks by turning Responsible AI principles into practical governance actions, metrics, documentation, and review models.

The five pillars are Data, Algorithm, Responsibility, Workflow, and INfrastructure. Together, they cover data quality, model robustness, accountability, lifecycle traceability, and infrastructure efficiency.

DARWIN creates a structured governance model for AI systems by defining controls around data lineage, algorithm behavior, ownership, compliance, workflow checkpoints, and monitoring readiness.

DARWIN is useful for AI/ML teams, data scientists, compliance teams, legal teams, risk officers, infrastructure teams, and executive stakeholders responsible for trusted AI adoption.

DARWIN improves audit readiness by documenting AI lifecycle steps, ownership, risk controls, model evaluation, data usage, human oversight, and compliance evidence from the start.

Yes. DARWIN can support regulated and high-impact industries such as finance, healthcare, life sciences, manufacturing, public sector, technology, insurance, and enterprise operations where AI trust and control matter.

US Office Address

50 Division Street, Suite 501,

Somerville, NJ 08876, US

Legal & Support

Tekframeworks

© Tekframeworks. All rights reserved.